Quick Verdict: DeepSeek is a Chinese open-source AI that shocked the industry by matching GPT-4o and Claude 3.5 Sonnet on major benchmarks—while costing a fraction of the price. The V3 model (671B parameters) is available free via the web, and the API starts at just $0.28 per million input tokens.

Rating: ★★★★½ 4.5/5

Best For: Developers, researchers, and technically-minded users who want frontier AI performance at dramatically lower costs—or full control via self-hosting

Price: Free (web interface) | API: from $0.028/1M input tokens | Open-source: Yes (model weights on Hugging Face)

You’ve been hearing the noise about DeepSeek for months. A Chinese AI startup releasing a model that matches OpenAI and Anthropic—for a training cost that’s orders of magnitude lower. It sounds like hype. It isn’t.

Based on our research across official documentation, benchmark papers, and developer community feedback, DeepSeek V3 (currently at version 3.2 on the API) represents a genuine inflection point in AI accessibility. This review covers what DeepSeek actually is, what the verified benchmarks show, what it costs, and exactly who should be using it.

→ Skip to pricing | → See benchmarks | → Compare alternatives

What is DeepSeek?

DeepSeek (深度求索) is a Chinese AI research company founded in 2023, headquartered in Hangzhou, China. Their stated mission is advancing toward AGI through open research—and unlike many AI companies that keep their best models proprietary, DeepSeek releases model weights publicly on Hugging Face.

Their flagship model, DeepSeek V3, is a Mixture-of-Experts (MoE) language model with 671 billion total parameters—but here’s the key insight that makes it economically special: only 37 billion parameters are active for any given token. This architecture lets the model achieve frontier performance while running efficiently, which is why DeepSeek trained V3 for only 2.788 million H800 GPU hours. Compare that to the estimated tens of millions of GPU hours spent training competing frontier models.

The result is a model that matches or beats GPT-4o and Claude 3.5 Sonnet on major benchmarks while costing a fraction of the price to run. According to DeepSeek’s official API documentation, the current API version is V3.2, which supports both standard chat (deepseek-chat) and a thinking/reasoning mode (deepseek-reasoner).

Think of it this way: if GPT-4 is a gas-guzzling sports car, DeepSeek V3 is a hybrid that goes just as fast on the highway but costs almost nothing to fuel. The engineering innovation isn’t in raw compute—it’s in efficiency.

The DeepSeek Model Lineup Explained

DeepSeek has released multiple models, and the naming can be confusing. Here’s what actually exists and what each one does:

DeepSeek V3 (and V3.2 on the API)

The flagship general-purpose model. Released December 2024, with subsequent updates bringing it to V3.2 on the API as of early 2026. This is the model you’re using when you access chat.deepseek.com or the deepseek-chat API endpoint.

Per DeepSeek’s official documentation: 671B total parameters, 37B activated per token, 128K context window. Trained on 14.8 trillion tokens. The architecture combines Multi-head Latent Attention (MLA) and DeepSeekMoE for efficient inference.

DeepSeek R1 (The Reasoning Model)

Released January 2025, DeepSeek R1 is a reasoning-focused model trained via large-scale reinforcement learning on the V3 base. According to DeepSeek’s GitHub documentation, R1 achieves performance comparable to OpenAI-o1 on math, code, and reasoning tasks. It’s available via the deepseek-reasoner API endpoint.

R1 also comes in distilled smaller versions that run on consumer hardware: 1.5B, 7B, 8B, 14B, 32B, and 70B parameter versions, distilled from the full R1 model using Qwen2.5 and LLaMA3 architectures. The R1-Distill-Qwen-32B model reportedly outperforms OpenAI-o1-mini on several benchmarks—making it one of the most capable small models ever released.

Which One Should You Use?

- DeepSeek V3 (deepseek-chat): General tasks—writing, coding help, analysis, Q&A. Fast and affordable.

- DeepSeek R1 (deepseek-reasoner): Complex math, logic problems, multi-step reasoning, difficult coding challenges. Slower but more thorough.

- R1-Distill models: Local self-hosting where full 671B isn’t feasible. 32B version is particularly strong.

Key Features: What DeepSeek Actually Delivers

Massive Scale, Efficient Design

The 671B/37B MoE architecture is the core engineering achievement. By activating only 37B parameters per token—the same scale as models like Llama 3.1 70B extended—DeepSeek gets the knowledge breadth of a 671B model at the inference cost of a much smaller one. Per the official technical paper (arxiv.org/pdf/2412.19437), this is achieved through Multi-head Latent Attention (MLA), which compresses the key-value cache significantly, and DeepSeekMoE, which uses fine-grained experts and shared expert isolation for load balancing.

128K Context Window

According to DeepSeek’s official GitHub repository, both V3 and R1 support a 128K token context window. This is confirmed by the API documentation, which lists a 128K context limit for both deepseek-chat and deepseek-reasoner endpoints. That’s enough for very long documents, large codebases, or extended multi-turn conversations—comparable to GPT-4 Turbo and Claude 3.5 Sonnet.

Reasoning Capabilities Built In

What’s interesting about V3 is that reasoning capabilities were distilled into it from the R1 series during post-training. DeepSeek’s official documentation describes how “verification and reflection patterns of R1” were incorporated into V3’s training pipeline. So even the standard V3 model shows stronger reasoning than a typical non-reasoning model—you get the benefit of R1’s reasoning patterns without needing to always use the slower, more expensive reasoning mode.

OpenAI-Compatible API

According to DeepSeek’s official API documentation, the DeepSeek API uses an OpenAI-compatible format. You can use the OpenAI SDK with a simple base_url swap (https://api.deepseek.com) and your DeepSeek API key. For teams already using OpenAI’s API, switching to DeepSeek for cost savings often requires only changing two lines of code.

Open-Source Model Weights

Both V3 and R1 model weights are available on Hugging Face under DeepSeek’s model license, which permits commercial use. The code is MIT licensed. This transparency is genuinely rare at this capability level—Anthropic and OpenAI release nothing comparable publicly.

Performance Benchmarks: The Verified Numbers

All benchmarks below are sourced directly from DeepSeek’s official GitHub repositories (github.com/deepseek-ai/DeepSeek-V3 and github.com/deepseek-ai/DeepSeek-R1). We’ve noted the specific benchmarks; you can verify each one in the original papers.

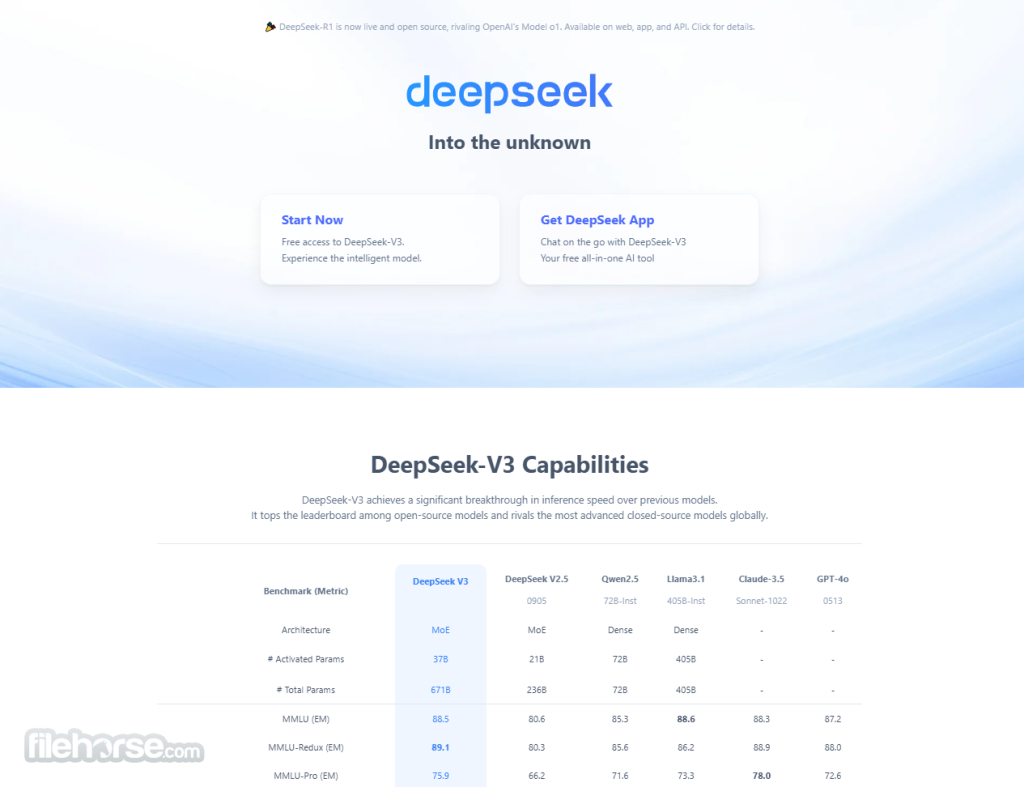

DeepSeek V3 Base Model vs Competitors

| Benchmark | DeepSeek V3 | Qwen2.5 72B | LLaMA3.1 405B | DeepSeek V2 |

|---|---|---|---|---|

| HumanEval (Code) | 65.2% | 53.0% | 54.9% | 43.3% |

| MBPP (Code) | 75.4% | 72.6% | 68.4% | 65.0% |

| GSM8K (Math) | 89.3% | 88.3% | 83.5% | 81.6% |

| MATH | 61.6% | 54.4% | 49.0% | 43.4% |

| MMLU | 87.1% | 85.0% | 84.4% | 78.4% |

| BBH | 87.5% | 79.8% | 82.9% | 78.8% |

Source: DeepSeek V3 official GitHub README (github.com/deepseek-ai/DeepSeek-V3). Base model comparison.

DeepSeek V3 Chat Model vs GPT-4o and Claude 3.5 Sonnet

| Benchmark | DeepSeek V3 | GPT-4o (0513) | Claude 3.5 Sonnet | Llama 3.1 405B |

|---|---|---|---|---|

| MMLU | 88.5% | 87.2% | 88.3% | 88.6% |

| MMLU-Pro | 75.9% | 72.6% | 78.0% | 73.3% |

| Architecture | MoE (37B active) | Unknown (Dense est.) | Unknown | Dense (405B) |

Source: DeepSeek V3 official GitHub README, chat model evaluation section.

The honest take on these numbers: DeepSeek V3 genuinely matches GPT-4o and Claude 3.5 Sonnet on general knowledge and language benchmarks. On coding and math, V3 leads the open-source field by a clear margin. This isn’t close—V3 represents a step change in what open-source AI can do.

What the benchmarks don’t capture: response quality on nuanced, multi-step prompts and creative tasks where the “feel” of the model matters. Developer community feedback (which we’ll cover below) gives a more textured picture.

DeepSeek Pricing: The Economics That Changed AI

All pricing below is sourced directly from DeepSeek’s official API documentation at api-docs.deepseek.com. DeepSeek notes that “product prices may vary” and recommends checking their pricing page regularly.

Free Options

- Web interface (chat.deepseek.com): Free, no subscription required. Supports both V3 and R1 reasoning modes. Rate limits apply during peak usage.

- Open-source model weights: Download V3 and R1 from Hugging Face for free. Commercial use permitted under DeepSeek’s model license. You need significant GPU hardware to run the full 671B model.

API Pricing (Per the Official API Docs)

| Endpoint | Input (Cache Hit) | Input (Cache Miss) | Output |

|---|---|---|---|

| deepseek-chat (V3.2, standard) | $0.028/1M tokens | $0.28/1M tokens | $0.42/1M tokens |

| deepseek-reasoner (V3.2, thinking mode) | $0.028/1M tokens | $0.28/1M tokens | $0.42/1M tokens |

Source: api-docs.deepseek.com/quick_start/pricing. Prices in USD per 1M tokens. Cache hit pricing applies to repeated prompt prefixes.

Context: To put this in perspective, GPT-4o charges $5/1M input and $15/1M output tokens. Claude 3.5 Sonnet charges $3/1M input and $15/1M output. DeepSeek’s output pricing at $0.42/1M tokens is roughly 35x cheaper than GPT-4o output and 36x cheaper than Claude 3.5 Sonnet output—for a model that matches them on major benchmarks. This is the real story of DeepSeek.

→ Create a DeepSeek API account

Pros and Cons: The Honest Assessment

✅ What Makes DeepSeek Genuinely Impressive

- Frontier performance at a fraction of the cost — Verified benchmarks show V3 matching GPT-4o on MMLU (88.5% vs 87.2%). For API users, the cost difference is 35-36x on output tokens.

- Open-source model weights — Real open-source under a commercial-use-friendly license. Downloadable on Hugging Face. You can audit the model, run it locally, and never worry about API availability or vendor pricing changes.

- Two models for two use cases — V3 (fast, cheap, broadly capable) and R1 (slower, more thorough reasoning) via the same API. The R1 reasoning model is legitimately competitive with OpenAI-o1 according to DeepSeek’s published benchmarks.

- OpenAI-compatible API — Two-line code change to switch from OpenAI. No learning curve for existing GPT-4 users.

- Strong on math and code — HumanEval at 65.2% and MATH at 61.6% lead the open-source field significantly. For developers and researchers, these are the benchmarks that matter most.

- Distilled smaller models available — R1-Distill variants from 1.5B to 70B let you run capable reasoning models locally without H100-grade hardware.

❌ Real Limitations to Know Before Committing

- Chinese company with data privacy implications — Data sent to the API is processed in China. For organizations with regulatory requirements (HIPAA, GDPR, government contracts), this is a hard blocker unless you self-host.

- Context window is 128K, not unlimited — Some sources have overstated this. The official API documentation confirms 128K. For most tasks this is plenty, but it’s not the “entire repository” magic sometimes claimed.

- Self-hosting the full model is hardware-intensive — The 671B model requires substantial server-grade GPU infrastructure. The 685GB model size isn’t running on a gaming PC. The distilled R1 variants are far more accessible.

- Occasional service availability issues — Developer community reports (r/LocalLLaMA, Hacker News threads) have documented the web interface having capacity issues during high-traffic periods. The API is generally more reliable.

- Some alignment and safety differences — Developer testing has noted DeepSeek sometimes handles sensitive topics differently from Western models, partly due to different RLHF alignment priorities. Worth testing for your specific use cases.

- API output length limits — According to official API docs, default max output is 4K tokens for standard mode, 8K maximum. Reasoning mode allows 32K default, 64K maximum. For long document generation, you’ll need to plan around these limits.

Who Should Use DeepSeek?

✅ DeepSeek Is a Strong Fit For:

- Startups and indie developers watching API costs — If you’re building an AI-powered product and the GPT-4 bill is a problem, DeepSeek’s pricing changes the economics fundamentally. The OpenAI compatibility means migration is straightforward.

- Researchers needing a capable open-source baseline — Academic and industry researchers who need a capable model they can probe, fine-tune, and publish results on without API black-box limitations.

- Development teams needing coding assistance — V3 scores well on HumanEval and MBPP. For code review, debugging, and generation tasks, it’s competitive with the best available models.

- Math and STEM applications — The R1 reasoning model achieving OpenAI-o1 parity on math benchmarks makes it a serious option for educational platforms, tutoring tools, and scientific computing applications.

- Organizations that can self-host — If you have the infrastructure, running the open-source weights gives you complete data sovereignty, no API rate limits, and zero per-token costs after hardware investment.

- Privacy-conscious users with their own hardware — The R1-Distill-32B or 70B models running locally via Ollama or vLLM give you a reasoning-capable model with no data leaving your machine.

❌ DeepSeek Is Probably Not Right If:

- Your organization legally can’t process data in China (government, healthcare, regulated finance)

- You need guaranteed uptime SLAs—enterprise contracts with uptime guarantees aren’t currently a DeepSeek offering comparable to Azure OpenAI

- You’re looking for a general-purpose writing assistant with strong creative capabilities; while competent, the model’s optimization for STEM tasks shows

- You need extensive tool-calling and function-calling ecosystem depth—the API supports JSON output and tool calls, but the ecosystem of pre-built integrations is thinner than OpenAI’s

How DeepSeek Compares to GitHub Copilot and Coding Assistants

DeepSeek V3 and R1 are base language models—not purpose-built coding tools. The comparison to GitHub Copilot is really about how you use them. Here’s the honest breakdown:

| Feature | DeepSeek V3 (API) | GitHub Copilot | Cursor |

|---|---|---|---|

| Price | $0.42/1M output tokens | $10-$19/user/month | $20/month (Pro) |

| IDE Integration | Via API (no native plugin) | Native VS Code, JetBrains | Built-in (fork of VS Code) |

| Context Window | 128K | Varies (GPT-4 based) | Up to 200K (Claude) |

| Open Source | ✅ Full model weights | ❌ | ❌ |

| Best For | API/product builders, researchers | Inline code completion | Full-repo AI editing |

The honest take: GitHub Copilot and Cursor solve a different problem. They’re IDE-native tools built for the coding workflow. DeepSeek V3 is a frontier model you access via API. You can absolutely build your own coding assistant on top of DeepSeek—and some developers do—but it requires more setup than Copilot. For exploring DeepSeek’s capabilities before building anything, start with the free web interface.

Where DeepSeek wins handily is cost structure for applications that need to process large amounts of code programmatically—code review pipelines, documentation generators, automated testing, repository analysis. At $0.42/1M output tokens, doing things at scale that would bankrupt you on GPT-4 becomes affordable.

What Developers Are Saying: Community Feedback

Developer community reception has been genuinely enthusiastic, particularly on r/LocalLLaMA, Hacker News, and GitHub. Based on our research of public developer discussions:

The V3 release in December 2024 generated significant attention on Hacker News (the original announcement thread was one of the most-discussed AI threads of the month), with developers noting that the benchmark numbers matched up in their own testing. The consistent feedback was surprise at the quality-to-cost ratio on API calls.

On r/LocalLLaMA, the R1-Distill models attracted significant experimentation. The 32B and 70B distilled versions became popular for local reasoning setups, with community members running them through llama.cpp and Ollama on consumer hardware (high-end workstations with multiple GPUs). Common sentiment: the reasoning traces in R1 are longer and more verbose than OpenAI-o1, which some users prefer for transparency and some find excessive.

Data sovereignty and privacy concerns appear consistently in threads—particularly from users at companies with data handling requirements. The practical resolution most developers land on: use the API for non-sensitive work, self-host distilled models for anything sensitive.

One pattern worth noting from community discussions: some developers report that deepseek-reasoner (the R1 thinking mode) can “overthink” relatively simple problems, producing lengthy reasoning chains where a quicker V3 answer would be sufficient. The guidance from experienced users is to use deepseek-chat by default and only switch to deepseek-reasoner for genuinely complex multi-step problems.

Getting Started: How to Access DeepSeek

Option 1: Free Web Interface (Easiest)

- Go to chat.deepseek.com

- Create a free account

- Toggle between DeepSeek V3 (standard) and DeepSeek R1 (reasoning mode)

- No credit card required

Option 2: API Access (For Developers)

- Create an account at platform.deepseek.com

- Get your API key from the dashboard

- Use with the OpenAI SDK (just change base_url to

https://api.deepseek.comand use your DeepSeek API key) - Models:

deepseek-chat(V3.2 standard) ordeepseek-reasoner(V3.2 thinking mode)

Option 3: Self-Hosting (For Privacy/Control)

- Download model weights from Hugging Face (deepseek-ai/DeepSeek-V3 or DeepSeek-R1)

- Note: Full 671B model is 685GB and requires high-end server infrastructure (multiple H100/A100 GPUs)

- For more accessible self-hosting, use the R1-Distill variants (7B, 14B, 32B, 70B) which run on prosumer hardware via Ollama or vLLM

- The R1-Distill-Qwen-32B is the sweet spot for capable local reasoning without enterprise-grade hardware

DeepSeek Alternatives to Consider

If DeepSeek isn’t the right fit, here’s where to look:

- Claude (Anthropic): Better for nuanced writing, analysis, and tasks requiring strong safety guardrails. Significantly more expensive via API but available through enterprise agreements with US-based data processing.

- GPT-4o (OpenAI): The benchmark competitor DeepSeek is measured against. Stronger ecosystem, function-calling depth, and enterprise support. 35x more expensive on output tokens. Worth it if the ecosystem integrations matter more than cost.

- Kilo Code: If you want an open-source coding-focused tool with IDE integration rather than raw API access, Kilo Code is built for development workflows specifically.

- Llama 3.1 405B: Meta’s fully open-source alternative. Comparable capability tier (slightly below V3 on most benchmarks), but fully open weights with no usage restrictions. Better for use cases requiring maximum license freedom.

- Qwen2.5 72B: Alibaba’s strong open-source model. More compact than V3, easier to self-host, competitive performance on general tasks. Good if you want capable open-source without 671B-scale hardware requirements.

FAQ: What Developers Actually Ask About DeepSeek

Is DeepSeek V3 really as good as GPT-4o?

Based on official published benchmarks, yes—on standard academic benchmarks like MMLU (88.5% V3 vs 87.2% GPT-4o) and coding benchmarks, V3 is broadly comparable. Real-world nuanced tasks may tell a different story depending on your specific use case. The only honest way to know is to test both on your actual workload.

What’s the difference between DeepSeek V3 and DeepSeek R1?

V3 (accessed as deepseek-chat) is the standard general-purpose model—fast, affordable, broadly capable. R1 (deepseek-reasoner) is a reasoning model trained with reinforcement learning to show its thinking process, similar to OpenAI-o1. R1 is slower and better suited to complex math, logic, and multi-step reasoning problems. According to DeepSeek’s documentation, R1 achieves performance comparable to OpenAI-o1 on math, code, and reasoning benchmarks.

Is DeepSeek really free?

The web interface at chat.deepseek.com is free with an account. The model weights are free to download from Hugging Face for self-hosting. The API is paid (from $0.028/1M tokens for cached input, per official pricing). There’s no ongoing subscription for API—you pay for what you use.

Is it safe to send my code to DeepSeek’s API?

Data sent to DeepSeek’s API is processed by a Chinese company operating under Chinese law. For non-sensitive code and general development questions, many developers use it without issue. For proprietary code, trade secrets, or anything regulated (HIPAA, financial data), either avoid the API entirely or self-host the open-source weights on your own infrastructure where nothing leaves your servers.

What context window does DeepSeek actually support?

According to DeepSeek’s official API documentation, the context window is 128K tokens for both deepseek-chat and deepseek-reasoner. This is confirmed by the Hugging Face model card and GitHub repository. Some third-party sources claim larger context windows—treat those with skepticism unless sourced from official DeepSeek documentation.

Can I run DeepSeek locally on my own hardware?

The full 671B model requires substantial server infrastructure—the model weights are 685GB total. However, the R1-Distill variants are much more accessible: the 32B version can run on high-end consumer setups (multiple RTX 4090s or similar), and the 7B version runs on a single modern GPU. Tools like Ollama, vLLM, and llama.cpp support these distilled models.

How does DeepSeek compare to Copilot for coding specifically?

They solve different problems. GitHub Copilot is a native IDE tool built for inline code completion and chat within your editor—frictionless for day-to-day coding. DeepSeek V3/R1 are frontier models you access via API that happen to be strong at coding. You’d build a custom integration to use DeepSeek in your editor, whereas Copilot is plug-and-play. For developers who want maximum flexibility and cost control (and don’t mind setup), DeepSeek’s API is compelling. For quick IDE integration, Copilot or Cursor are easier starting points.

Does DeepSeek have a coding-specific API or SDK?

No dedicated SDK. DeepSeek’s API is OpenAI-compatible, so you use the standard OpenAI Python or Node.js client with a changed base_url. Per DeepSeek’s API documentation: set base_url = "https://api.deepseek.com" and use your DeepSeek API key in place of an OpenAI key. Model names are deepseek-chat and deepseek-reasoner.

Final Verdict: What DeepSeek Really Means for AI in 2026

🏆 Our Verdict

DeepSeek’s model releases have done something important: they’ve demonstrated that frontier AI performance doesn’t require trillion-dollar compute budgets or US-based AI labs. The 671B MoE architecture achieving GPT-4o parity while costing a fraction of the price to train and run is a genuine engineering achievement, backed by a published technical paper and publicly verifiable benchmarks.

For developers building AI-powered products and watching their API bill, DeepSeek V3 is hard to ignore. The 35x price differential versus GPT-4o on output tokens—for a model that matches it on MMLU—changes what’s economically feasible. Projects that didn’t make sense at GPT-4 pricing become viable at DeepSeek pricing.

The limitations are real too: data sovereignty concerns are legitimate for regulated industries, self-hosting the full model requires serious hardware, and the ecosystem maturity doesn’t match OpenAI’s years of developer tooling. These aren’t reasons to dismiss DeepSeek—they’re reasons to be clear-eyed about where it fits.

Here’s what other reviews often miss: DeepSeek isn’t competing with GitHub Copilot or Cursor on the IDE-native coding assistant front. It’s a frontier foundation model that happens to be open-source and extremely affordable. The real comparison is to OpenAI’s API and Anthropic’s API—and on that comparison, for most use cases, DeepSeek is compelling.

Rating: ★★★★½ 4.5/5

Bottom Line: If you’re building something that needs frontier AI capabilities and cost is a factor—or if you want a capable open-source model you can actually run yourself—DeepSeek V3 and R1 deserve serious evaluation. Start with the free web interface, test against your actual use cases, then decide.