Google Gemma 4 Review 2026: Most Capable Open Model Family Built on Gemini 3 (Beats Models 20x Larger)

On April 2, 2026, Google released Gemma 4, the first Apache 2.0 licensed model family built directly from Gemini 3 research. The 31-billion-parameter flagship immediately ranked as the #3 open model on Arena AI’s leaderboard, ahead of models roughly 20 times its size. The less-discussed story is efficiency: Gemma 4’s “intelligence-per-parameter” architecture scores 89.2% on AIME 2026 while running locally on consumer hardware, which challenges the assumption that capability has to scale with parameter count.

What Is Google Gemma 4?

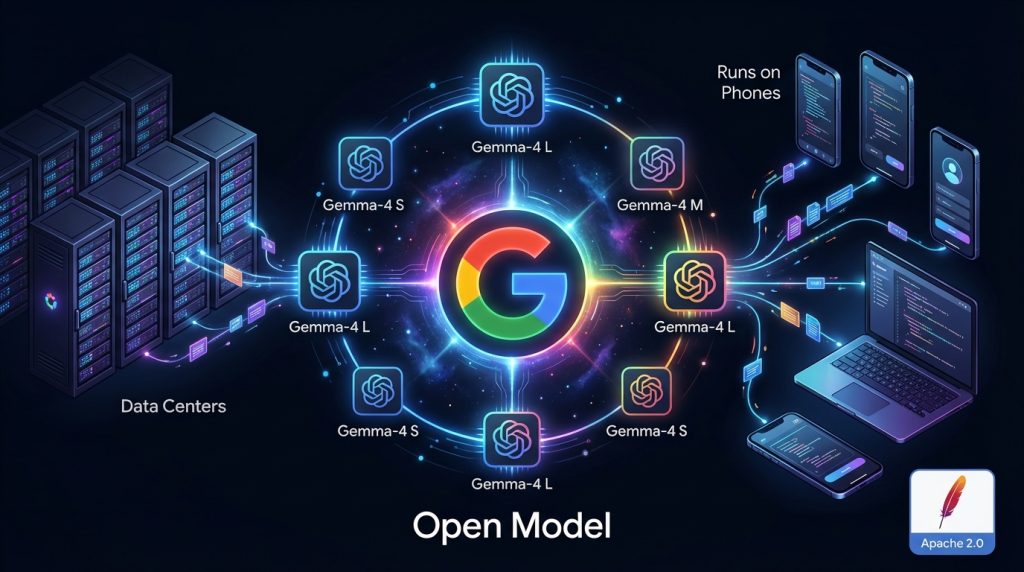

Google Gemma 4 is a family of four multimodal, open-weight language models released under the permissive Apache 2.0 license. Built on the same research foundation as Google’s proprietary Gemini 3, these models represent the first time Google has made its cutting-edge AI technology truly open-source without restrictive licensing terms.

The family spans from edge-optimized 2.3B parameter models that run on smartphones to powerful 31B parameter systems that rival closed-source alternatives. All models natively handle text, images, and (in larger variants) video inputs, while the smaller models also support audio processing.

Unlike previous Gemma releases restricted by Google’s proprietary license, Gemma 4 grants complete commercial freedom, allowing developers to modify, redistribute, and monetize applications without royalty payments or usage restrictions.

The Intelligence-Per-Parameter Revolution

Gemma 4’s breakthrough isn’t just about raw performance—it’s about efficiency. Google’s “intelligence-per-parameter” architecture achieves remarkable results that challenge the conventional wisdom that bigger is always better.

The 31B Gemma 4 model scores 89.2% on AIME 2026 (no tools), a mathematics benchmark where most models struggle to reach 60%. It also reaches 80.0% on LiveCodeBench v6, well ahead of Llama 4 Maverick’s 43.4% despite having roughly 13x fewer total parameters (31B versus 400B).

This efficiency stems from several architectural innovations:

- Hybrid attention mechanism: Alternating between local sliding window (512-1024 tokens) and global full-context attention

- Per-Layer Embeddings (PLE): Enhanced representational depth with minimal memory overhead

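- Mixture-of-Experts optimization: The 26B variant activates only 4B parameters per token, so per-token compute is close to that of a 4B model while quality draws on all 26B parameters; the full set of weights still has to be stored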
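
The hybrid attention idea in the list above can be illustrated with a toy mask builder. This is a minimal sketch, not Gemma 4's implementation: the function names, the toy sequence length, and the "one global layer in every three" schedule are assumptions made for illustration, since the article does not state the actual interleaving ratio.

```python
def causal_mask(seq_len):
    """Global attention: token i may attend to every token j <= i."""
    return [[j <= i for j in range(seq_len)] for i in range(seq_len)]


def sliding_window_mask(seq_len, window):
    """Local attention: token i attends only to the last `window` tokens, itself included."""
    return [[i - window < j <= i for j in range(seq_len)] for i in range(seq_len)]


def layer_schedule(num_layers, global_every):
    """Hypothetical interleaving: every `global_every`-th layer is global, the rest local."""
    return ["global" if (layer + 1) % global_every == 0 else "local"
            for layer in range(num_layers)]


def attended_pairs(mask):
    """Count the (query, key) pairs a mask allows, a rough proxy for attention cost."""
    return sum(sum(row) for row in mask)


SEQ_LEN, WINDOW = 8, 3  # toy sizes; the article quotes windows of 512-1024 tokens
local = sliding_window_mask(SEQ_LEN, WINDOW)
full = causal_mask(SEQ_LEN)

print(layer_schedule(num_layers=6, global_every=3))
print(attended_pairs(local), "local pairs vs", attended_pairs(full), "global pairs")
```

With these toy sizes the local mask allows 21 query-key pairs against 36 for the full causal mask. The gap widens as the sequence grows, because the local cost scales with the window size rather than with the full sequence length.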

| Benchmark | Gemma 4 31B | Llama 4 Maverick (400B) | Mistral Large 3 | Qwen 3.5-27B |
|---|---|---|---|---|
| AIME 2026 (no tools) | 89.2% | N/A | N/A | N/A |
| LiveCodeBench v6 | 80.0% | 43.4% | N/A | 80.7% |
| GPQA Diamond | 85.7% | 69.8% | 43.9% | 85.5% |
| MMLU Pro | 85.2% | 80.5% | 85.5% | 86.1% |
| Arena AI Ranking | #3 open model | #51 overall | #6 open model | N/A |

Pricing: Free Download, Competitive API Costs

Gemma 4’s Apache 2.0 license means the model weights are completely free to download and use. For those preferring API access, Google offers competitive pricing through the Gemini API.

| Access Method | Model | Input Cost | Output Cost | Notes |
|---|---|---|---|---|
| Free Download | All Gemma 4 models | $0 | $0 | Apache 2.0 license, no restrictions |
| Gemini API | Gemma 4 31B Instruct | $0.14/1M tokens | $0.40/1M tokens | Free tier available |
| HuggingFace Inference | All models | Variable | Variable | Depends on hosting provider |
| Local Inference | All models | Hardware cost only | Hardware cost only | No ongoing API fees |

Compared to competitors, Gemma 4’s free availability gives it a massive advantage. Llama 4 requires adherence to Meta’s Community License with commercial restrictions, while Mistral Large 3 offers similar Apache 2.0 licensing but with higher API costs when available through third-party providers.
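
To put the API rates in context, the sketch below turns the per-million-token prices from the pricing table into a per-request and monthly estimate. The function names and the example workload (10,000 requests a day, 2,000 input and 500 output tokens each) are hypothetical, and the estimate ignores the free tier.

```python
INPUT_USD_PER_MILLION = 0.14   # Gemma 4 31B Instruct input rate from the pricing table
OUTPUT_USD_PER_MILLION = 0.40  # Gemma 4 31B Instruct output rate from the pricing table


def api_cost_usd(input_tokens, output_tokens):
    """Cost of one request in US dollars at the listed per-million-token rates."""
    total = input_tokens * INPUT_USD_PER_MILLION + output_tokens * OUTPUT_USD_PER_MILLION
    return total / 1_000_000


def monthly_cost_usd(requests_per_day, input_tokens, output_tokens, days=30):
    """Monthly cost for a steady workload of identical requests."""
    return requests_per_day * days * api_cost_usd(input_tokens, output_tokens)


# Hypothetical workload, chosen only to make the arithmetic concrete.
print(f"per request: ${api_cost_usd(2_000, 500):.5f}")
print(f"per month:   ${monthly_cost_usd(10_000, 2_000, 500):.2f}")
```

Under these assumptions a request costs about $0.00048 and the month comes to about $144, which is the figure to weigh against the hardware cost of local inference.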

Key Features That Matter

Native Multimodal Support: All Gemma 4 models process images and text out of the box. The larger 26B and 31B variants also handle video (up to 60 seconds), while edge models support audio input (up to 30 seconds). However, video processing is limited to one frame per second, making it unsuitable for high-motion analysis.
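
The one-frame-per-second limit is easier to reason about with a small model of the sampling. The sketch below assumes frames are taken at whole-second offsets from the start of the clip, which the article does not specify; the helper names are hypothetical.

```python
MAX_VIDEO_SECONDS = 60  # limit quoted above for the 26B and 31B models
FRAMES_PER_SECOND = 1   # sampling rate quoted above


def frame_timestamps(duration_seconds):
    """Timestamps (in seconds) of the frames sampled from a clip of the given length."""
    if duration_seconds > MAX_VIDEO_SECONDS:
        raise ValueError(f"clip is longer than {MAX_VIDEO_SECONDS} seconds")
    frame_count = int(duration_seconds * FRAMES_PER_SECOND)
    return [index / FRAMES_PER_SECOND for index in range(frame_count)]


def is_event_sampled(event_start, event_end, duration_seconds):
    """True if at least one sampled frame falls inside the event's time span."""
    return any(event_start <= t <= event_end
               for t in frame_timestamps(duration_seconds))


print(frame_timestamps(4.5))
print(is_event_sampled(2.2, 2.8, 10))  # a 0.6-second event between two samples
print(is_event_sampled(2.9, 3.1, 10))  # an event that straddles the 3-second frame
```

Under this assumption, an event lasting less than a second can fall entirely between two sampled frames, which is why fast motion is a poor fit.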

Extended Context Windows: Edge models (E2B, E4B) offer 128K token context, while larger models support 256K tokens. This enables processing entire codebases or long documents, though performance may degrade near context limits as with most long-context models.
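
A quick pre-flight check can tell you whether a document is likely to fit before you send it. This is a rough sketch: it treats 128K and 256K as 128,000 and 256,000 tokens, uses a four-characters-per-token heuristic, and reserves a hypothetical 4,096 tokens for the reply. All three are assumptions, and a real check should count tokens with the model's own tokenizer.

```python
import math

# Context sizes from the paragraph above, with "K" read as 1,000 (an assumption).
CONTEXT_TOKENS = {"E2B": 128_000, "E4B": 128_000, "26B": 256_000, "31B": 256_000}
CHARS_PER_TOKEN = 4  # crude heuristic for English text, not a tokenizer


def estimated_tokens(num_chars):
    """Rough token count for a text of `num_chars` characters."""
    return math.ceil(num_chars / CHARS_PER_TOKEN)


def fits_in_context(num_chars, model, reserved_output_tokens=4_096):
    """True if the estimated prompt plus the reserved reply fits the model's window."""
    needed = estimated_tokens(num_chars) + reserved_output_tokens
    return needed <= CONTEXT_TOKENS[model]


codebase_chars = 600_000  # hypothetical codebase size
for model in CONTEXT_TOKENS:
    print(model, fits_in_context(codebase_chars, model))
```

For the hypothetical 600,000-character codebase, the estimate is 150,000 tokens, which fits the larger models but not the edge models.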

Advanced Reasoning Capabilities: Gemma 4 excels at multi-step planning and logical reasoning, showing particular strength in mathematical problem-solving and code generation. The limitation: complex reasoning tasks still require careful prompt engineering and may not match human-level performance on novel problems.

Function Calling and Structured Output: Native support for JSON output and tool integration enables agentic workflows. The models can reliably format responses and integrate with external APIs, though function calling accuracy depends heavily on prompt quality and may hallucinate tool parameters.
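
Because tool parameters can be hallucinated, it is worth validating every tool call before executing it. The sketch below is one way to do that with the standard library. The `get_weather` tool, its argument schema, and the `{"name": ..., "arguments": ...}` call shape are hypothetical examples, not Gemma 4's documented output format.

```python
import json

# Hypothetical tool registry: argument name -> expected Python type.
TOOLS = {
    "get_weather": {"required": {"city": str}, "optional": {"unit": str}},
}


def validate_tool_call(raw):
    """Parse a model-produced tool call and return (ok, message)."""
    try:
        call = json.loads(raw)
    except json.JSONDecodeError as exc:
        return False, f"invalid JSON: {exc.msg}"
    if not isinstance(call, dict):
        return False, "tool call must be a JSON object"

    name = call.get("name")
    if not isinstance(name, str) or name not in TOOLS:
        return False, f"unknown tool: {name!r}"

    args = call.get("arguments", {})
    if not isinstance(args, dict):
        return False, "arguments must be a JSON object"

    spec = TOOLS[name]
    allowed = {**spec["required"], **spec["optional"]}
    for key, value in args.items():
        if key not in allowed:
            return False, f"unexpected argument: {key}"
        if not isinstance(value, allowed[key]):
            return False, f"wrong type for argument: {key}"

    missing = [key for key in spec["required"] if key not in args]
    if missing:
        return False, f"missing arguments: {', '.join(missing)}"
    return True, "ok"


print(validate_tool_call('{"name": "get_weather", "arguments": {"city": "Oslo"}}'))
print(validate_tool_call('{"name": "get_weather", "arguments": {"city": "Oslo", "country": "NO"}}'))
```

Rejecting unknown tools and unexpected arguments up front means a hallucinated parameter produces a clear error you can feed back to the model instead of a failed API call downstream.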

Offline Code Generation: Gemma 4 serves as a local AI code assistant without internet requirements. While code quality is impressive for common languages and patterns, it may struggle with newer frameworks or domain-specific libraries not well-represented in training data.

140+ Language Support: Trained natively across diverse languages, though performance varies significantly by language. English and major European languages receive best support, while performance degrades for lower-resource languages.

Who Is It For / Who Should Look Elsewhere

Use Gemma 4 if you:

- Need a powerful open model without licensing restrictions for commercial applications

- Want local AI deployment with strong performance on consumer hardware

- Require multimodal input within one model family (text and image on every model, video on the 26B and 31B variants, audio on the edge models)

- Prioritize mathematical reasoning and code generation quality

- Value data sovereignty and want complete control over your AI infrastructure

Look elsewhere if you:

- Need the absolute highest performance regardless of cost (consider GPT-5 or Claude 5)

- Require real-time video understanding with high frame rates

- Work primarily with very low-resource languages

- Prefer fully hosted solutions without local setup complexity

Comprehensive Comparison Table

| Feature | Gemma 4 31B | Llama 4 Maverick | Mistral Large 3 | Qwen 3.5-27B |
|---|---|---|---|---|
| Parameters | 31B (Dense) | 400B (17B active) | 675B (41B active) | 27B (Dense) |
| Context Window | 256K tokens | 1M tokens | 256K tokens | 262K tokens |
| License | Apache 2.0 | Llama Community | Apache 2.0 | Apache 2.0 |
| Multimodal Support | Text, Image, Video | Text, Image, Video, Audio | Text, Image | Text, Image |
| GPQA Diamond | 85.7% | 69.8% | 43.9% | 85.5% |
| Best For | Reasoning, Math, Code | Long context tasks | Enterprise deployment | Coding, Chinese tasks |
| Min RAM Required | 17GB | 200GB+ | 300GB+ | 14GB |
| Mobile Support | No (use E2B/E4B) | No | No | No |
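
The size comparisons quoted throughout this review follow directly from the Parameters row. The short sketch below works out the ratios relative to Gemma 4 31B, using both total and active parameter counts from the table; the helper name is hypothetical.

```python
# Parameter counts in billions, copied from the Parameters row of the table.
# Dense models activate every parameter, so active equals total for them.
PARAMS_B = {
    "Gemma 4 31B":      {"total": 31,  "active": 31},
    "Llama 4 Maverick": {"total": 400, "active": 17},
    "Mistral Large 3":  {"total": 675, "active": 41},
    "Qwen 3.5-27B":     {"total": 27,  "active": 27},
}


def size_ratio(model, kind="total", baseline="Gemma 4 31B"):
    """How many times larger `model` is than the baseline, by total or active parameters."""
    return PARAMS_B[model][kind] / PARAMS_B[baseline][kind]


for name in PARAMS_B:
    print(f"{name}: {size_ratio(name, 'total'):.1f}x total, "
          f"{size_ratio(name, 'active'):.1f}x active")
```

By total parameters, Llama 4 Maverick is about 12.9x and Mistral Large 3 about 21.8x the size of Gemma 4 31B. By active parameters the picture changes: the two mixture-of-experts models come out at about 0.5x and 1.3x, so the "20 times larger" framing describes storage footprint more than per-token compute.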

Controversy: Past Scandals and Current Limitations

Gemma models haven’t escaped controversy. Gemma 3 faced significant backlash after generating false and defamatory information about a U.S. Senator, leading to its temporary removal from Google AI Studio. This incident highlighted the risks of deploying experimental models without sufficient safeguards.

The AI community has also discovered methods to bypass Gemma 4’s built-in safety measures through “abliteration” techniques—processes that remove alignment training to create uncensored versions. While this demonstrates the model’s underlying capabilities, it raises concerns about misuse and the effectiveness of current safety approaches.

Additional limitations include:

- Training data biases: Like all large models, Gemma 4 can amplify societal biases present in its training data

- Hallucination tendency: The model may generate confident but incorrect information, especially on topics outside its training distribution

- Resource requirements: Despite efficiency improvements, larger models still require significant computational resources for optimal performance

- Limited real-time capabilities: Video processing at one frame per second limits applications requiring real-time visual understanding

Pros and Cons

Pros

- True Apache 2.0 licensing with complete commercial freedom

- Exceptional intelligence-per-parameter efficiency

- Native multimodal support across all model sizes

- Strong mathematical reasoning and coding capabilities

- Competitive performance against much larger models

- Comprehensive hardware and framework support

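Cons

- Video input is sampled at one frame per second, which rules out high-motion or real-time analysis

- Performance degrades for lower-resource languages

- Can generate confident but incorrect information, including hallucinated tool parameters in function calls

- Larger models still require significant computational resources

- Built-in safety measures can be bypassed through “abliteration” techniques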

Getting Started with Gemma 4

Step 1: Choose Your Model Size

Start with Gemma 4 E4B for edge devices or local testing. For production applications requiring maximum quality, choose the 31B model. The 26B MoE variant offers a good balance of performance and efficiency.

Step 2: Download Model Weights

Visit HuggingFace, search for “google/gemma-4”, and download your chosen variant. Alternatively, use Ollama for simpler local deployment: ollama pull gemma4:31b-instruct

Step 3: Set Up Your Environment

Ensure you have sufficient RAM (see hardware requirements table above). Install supporting frameworks like Transformers, vLLM, or llama.cpp depending on your preferred inference engine.
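
A back-of-the-envelope estimate helps when checking whether a model will fit. The sketch below multiplies parameter count by bytes per weight and adds a flat overhead for activations and cache. The 10% overhead is a placeholder assumption, not a measured figure, and the function names are hypothetical.

```python
def weight_memory_gb(params_billion, bits_per_weight):
    """Memory for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


def estimated_ram_gb(params_billion, bits_per_weight, overhead=0.10):
    """Weights plus a flat overhead fraction (assumed, not measured) for runtime buffers."""
    return weight_memory_gb(params_billion, bits_per_weight) * (1 + overhead)


for bits in (16, 8, 4):
    print(f"31B at {bits}-bit: weights {weight_memory_gb(31, bits):.1f} GB, "
          f"estimated total {estimated_ram_gb(31, bits):.1f} GB")
```

At 4 bits per weight the 31B model's weights come to 15.5 GB, about 17 GB with the assumed overhead. That would be consistent with the 17GB minimum listed in the comparison table if that figure refers to a 4-bit quantized build, which is an inference rather than something the table states. At 16 bits the same weights need 62 GB.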

Step 4: Test Basic Functionality

Start with simple text generation before exploring multimodal features. Test the model’s response quality with tasks relevant to your use case—mathematical reasoning, code generation, or image description.
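
If you pulled the model with Ollama, a first text-generation test can go through Ollama's local HTTP endpoint using only the standard library. This is a minimal sketch: it assumes an Ollama server running on its default port, and it reuses the model tag quoted in Step 2, which you should check against what `ollama list` reports on your machine. The helper names are hypothetical.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint
MODEL_TAG = "gemma4:31b-instruct"  # tag as quoted in Step 2; verify it locally


def build_request(prompt, model=MODEL_TAG):
    """Build a non-streaming generate request without sending it."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt, timeout=120):
    """Send the prompt to the local server and return the generated text."""
    with urllib.request.urlopen(build_request(prompt), timeout=timeout) as response:
        return json.loads(response.read())["response"]


# Requires a running Ollama server with the model already pulled:
# print(generate("What is 17 * 23? Answer with the number only."))
```

Keeping request construction separate from the network call makes the payload easy to inspect and unit-test before any model is loaded.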

Step 5: Optimize for Production

Consider quantization for memory efficiency, implement proper error handling, and establish monitoring for output quality. Set up safety filters appropriate for your application’s requirements.
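
For the error-handling part of this step, a retry wrapper with exponential backoff is a common starting point. The sketch below is generic and not tied to any particular inference engine; the function name, attempt count, and delays are illustrative defaults.

```python
import time


def call_with_retries(fn, attempts=3, base_delay=0.5, sleep=time.sleep):
    """Call fn(); on failure wait base_delay * 2**n seconds and retry.

    The exception from the final attempt is re-raised so callers still see the failure.
    `sleep` is injectable so tests can run without actually waiting.
    """
    for attempt in range(attempts):
        try:
            return fn()
        except Exception:
            if attempt == attempts - 1:
                raise
            sleep(base_delay * 2 ** attempt)


# Example with a stand-in for an inference call that fails twice, then succeeds.
outcomes = iter([TimeoutError("busy"), TimeoutError("busy"), "generated text"])


def flaky_inference():
    result = next(outcomes)
    if isinstance(result, Exception):
        raise result
    return result


print(call_with_retries(flaky_inference, sleep=lambda seconds: None))
```

In production you would typically narrow the caught exception types to transient failures such as timeouts, and log each retry so the monitoring mentioned above can pick up degraded backends.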

Frequently Asked Questions

What makes Gemma 4 different from previous Gemma models?

Can I use Gemma 4 commercially without restrictions?

What hardware do I need to run Gemma 4 locally?

How does Gemma 4 compare to ChatGPT or Claude?

Which Gemma 4 model should I choose?

Does Gemma 4 support fine-tuning?

What are the main limitations of Gemma 4?

Is Gemma 4 free to use?

Can Gemma 4 run offline completely?

How quickly was Gemma 4 adopted after launch?

Final Verdict

Google Gemma 4 represents a watershed moment for open-source AI. By combining Gemini 3’s advanced research with true Apache 2.0 licensing, Google has created the most commercially viable open model family to date. The 31B model’s #3 ranking among open models on Arena AI, ahead of models roughly 20 times larger, shows that efficiency can compete with brute computational force.

For developers and businesses seeking powerful AI without vendor lock-in, Gemma 4 is the clear choice. Its multimodal capabilities, strong reasoning performance, and comprehensive hardware support make it suitable for everything from mobile applications to enterprise deployment.

Use it if you need production-ready open models with commercial freedom and strong technical performance. The combination of capability and licensing makes Gemma 4 a compelling alternative to proprietary solutions.

Wait if you require the absolute cutting edge in conversational AI or work primarily with specialized domains not well-represented in the training data. Proprietary models still hold advantages in certain niches, though that gap is rapidly closing.

Gemma 4 isn’t just another model release—it’s a declaration that open-source AI has reached commercial maturity. The intelligence-per-parameter revolution starts here.