For years, the dream was simple: a full-power AI model running entirely on your phone, no cloud required, no subscription, no data leaving your device. The research existed — Microsoft’s BitNet papers, dozens of arxiv preprints, endless speculation on r/LocalLLaMA. But nobody had shipped it commercially. Until March 31, 2026, when PrismML — a Caltech-incubated startup that had been invisible until yesterday — emerged from stealth with something the AI industry has been promising and failing to deliver for three years: the world’s first commercially viable true 1-bit large language model. The Bonsai 8B fits in 1.15GB, runs 8x faster than its full-precision equivalent, and works on hardware you already own.

This isn’t a research paper. It’s a downloadable model with an Apache 2.0 license, GGUF support for llama.cpp, native Apple MLX integration, and benchmarks that put it on par with Llama 3 8B — at 1/14th the memory footprint. If those numbers hold up under independent scrutiny, the local AI space just changed.

Rating: 8.4/10 ⭐⭐⭐⭐

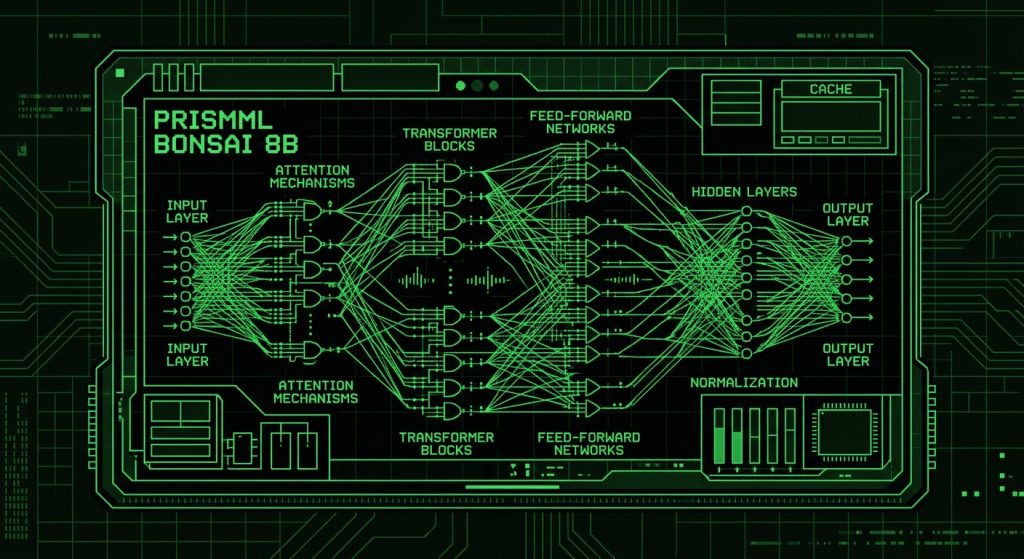

What Is PrismML Bonsai 8B?

PrismML Bonsai 8B is an 8.2-billion parameter large language model where every single weight — embeddings, attention layers, MLP layers, the LM head — operates at 1-bit precision. No higher-precision “escape hatches” anywhere in the network. Every calculation reduces to a binary decision: yes or no, +1 or -1. The result is a model that requires just 1.15GB of RAM, compared to the ~16GB required by a standard FP16 8B model.
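To make "every weight is +1 or -1" concrete, here is a minimal sketch of textbook sign binarization with one shared scale per group of weights. PrismML has not been quoted here on its exact scheme, so treat the function names (`binarize_group`, `pack_bits`, `dequantize`) and the mean-absolute-value scale as illustrative assumptions rather than the model's actual recipe.

```python
# Illustrative sketch only: sign binarization with one scale per group.
# This is a generic textbook scheme, not PrismML's published method.

def binarize_group(weights):
    """Return (scale, bits): bits[i] is 1 for a non-negative weight, else 0."""
    scale = sum(abs(w) for w in weights) / len(weights)  # mean absolute value
    bits = [1 if w >= 0 else 0 for w in weights]
    return scale, bits

def pack_bits(bits):
    """Pack a list of 0/1 values into bytes, 8 weights per byte."""
    packed = bytearray()
    for i in range(0, len(bits), 8):
        byte = 0
        for offset, bit in enumerate(bits[i:i + 8]):
            byte |= bit << offset
        packed.append(byte)
    return bytes(packed)

def dequantize(scale, bits):
    """Rebuild approximate weights: +scale for bit 1, -scale for bit 0."""
    return [scale if b else -scale for b in bits]

# Made-up example weights, chosen only to show the mechanics.
group = [0.42, -0.17, 0.05, -0.88, 0.31, -0.02, 0.64, -0.29]
scale, bits = binarize_group(group)
packed = pack_bits(bits)
restored = dequantize(scale, bits)
print(len(packed), "byte(s) for", len(group), "weights")
```

Eight weights collapse into a single byte plus one shared scale, which is where the size savings come from. The cost is that every weight in the group is restored to the same magnitude.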

PrismML was founded by researchers with Caltech backgrounds and trained Bonsai 8B on Google v4 TPUs. The model launched on March 31, 2026, alongside smaller siblings — Bonsai 4B (0.5GB) and Bonsai 1.7B (0.24GB) — all under the Apache 2.0 license, which permits full commercial use. Models are available on Hugging Face at huggingface.co/prism-ml and via the official demo repo at github.com/PrismML-Eng/Bonsai-demo. Investor Vinod Khosla (Khosla Ventures) has publicly backed the company, framing the launch as defining “the future of AI not by datacenter size, but by intelligence per unit of energy.”

The Story: Why 1-Bit Matters Now

To understand why Bonsai 8B is a potential paradigm shift, you have to understand what’s been stopping 1-bit LLMs from existing commercially until now.

Standard LLMs store each weight as a 16-bit or 32-bit floating-point number. That precision is why they work well — but it’s also why they’re heavy. An 8B model in FP16 needs 16GB of VRAM. Run it on consumer hardware and you’re looking at 4-bit quantization (4-8GB RAM), which degrades quality. Go further to 1-bit and, historically, models became nearly useless: the capacity to represent mathematical relationships collapsed when you had only two possible values per weight instead of 65,536.

Microsoft Research’s BitNet work (2024-2025) proved 1-bit was theoretically viable at smaller scales using ternary weights ({-1, 0, +1}, technically 1.58-bit). Their 2B model worked, but 2B parameters is too small for most real-world tasks, and the ternary approach still isn’t true binary. PrismML claims to have cracked the training recipe for a true 1-bit model at 8B parameters — the sweet spot where language models become genuinely capable at reasoning, coding, and multi-step logic.
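The "1.58-bit" label is just information theory: a weight with three possible states carries log2(3) bits. The short calculation below shows where that number comes from, alongside the binary case and the 65,536 bit patterns of a 16-bit weight.

```python
import math

def bits_per_weight(states):
    """Bits needed to encode one weight that can take `states` distinct values."""
    return math.log2(states)

binary = bits_per_weight(2)    # {-1, +1}
ternary = bits_per_weight(3)   # {-1, 0, +1}
fp16_states = 2 ** 16          # distinct bit patterns in a 16-bit weight

print(f"binary:  {binary:.2f} bits")
print(f"ternary: {ternary:.2f} bits")
print(f"16-bit patterns: {fp16_states}")
```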

Early independent testing suggests the headline claims largely hold up. One reviewer running the Bonsai 4B model on an M1 MacBook Air reported over 23 tokens/second. On an iPhone 17 Pro Max via Apple MLX Swift, the 8B model runs at approximately 44 tokens per second. Reasoning tasks including fraction arithmetic and logic problems produced correct answers with no hallucinations noted. Code generation tests produced functional HTML and Python scripts. These are informal sanity checks rather than standardized benchmarks, but they are checks that previous 1-bit attempts couldn’t pass.

The $10 trillion AI infrastructure buildout narrative just got a counterargument. If Bonsai 8B delivers what PrismML claims at scale, the assumption that powerful AI requires massive data center spend starts to look shaky.

Benchmark Performance

PrismML reports an average score of 70.5 across six benchmark categories, claiming competitive performance with leading FP16 8B instruct models. For context, Llama 3 8B (April 2024) scores 68.4 on MMLU (5-shot) and 62.2 on HumanEval (0-shot, pass@1). Full independent multi-benchmark validation of Bonsai 8B is still emerging as of this review — the model launched hours ago. We’ll update this table as third-party results come in.

| Benchmark / Metric | PrismML Bonsai 8B | Llama 3 8B (FP16) | Microsoft BitNet b1.58 (2B) | Mistral Small 4 (119B MoE) |
|---|---|---|---|---|
| Avg. Benchmark Score (6 categories) | 70.5 | ~69.1 (est.) | ~58.0 (2B scale) | ~78+ (larger model) |
| MMLU (language understanding) | ~68–70 (est.) | 68.4 | ~53.0 | ~75+ |
| HumanEval (code generation) | ~60–63 (est.) | 62.2 | ~40 (2B scale) | ~70+ |
| Memory footprint | 1.15 GB | ~16 GB | 0.4 GB (2B only) | 60–80 GB (full MoE) |
| Speed vs FP16 equivalent | ~8x faster | Baseline | ~6–8x faster (CPU) | N/A (different scale) |
| Energy efficiency vs FP16 | 4–5x lower | Baseline | ~5x lower (est.) | N/A |
| iPhone performance (tokens/s) | ~44 (iPhone 17 Pro Max) | Not practical | Not available for iPhone | Not practical on mobile |

Source: PrismML launch announcement, independent early testers (Reddit r/LocalLLaMA, YouTube reviews), Microsoft BitNet research papers. Estimated figures marked (est.) — full independent benchmarks pending. Table will be updated as third-party validation emerges.

Pricing

This is where Bonsai 8B breaks the mold entirely. There’s no SaaS pricing. There’s no per-token API charge. There’s no subscription.

| Option | PrismML Bonsai 8B | OpenAI GPT-5.4 (API) | Mistral Small 4 (API) | llama.cpp (local Llama 3 8B) |
|---|---|---|---|---|
| Base model access | Free (Apache 2.0) | Paid API ($2.50–$10/1M tokens) | Paid API (~$0.10/1M tokens) | Free (Meta community license) |
| Commercial use allowed? | ✅ Yes (Apache 2.0) | ✅ Yes (API terms) | ✅ Yes | ⚠️ Restricted (>700M MAU requires Meta approval) |
| Hardware required to run | Any device with 2GB+ RAM | None (cloud) | None (cloud) | 8–16GB RAM minimum |
| Privacy (data stays local?) | ✅ Fully local | ❌ Cloud only | ❌ Cloud only | ✅ Fully local |
| Ongoing cost at scale | $0 | Variable (per-token) | Variable (per-token) | Hardware cost only |

Source: PrismML launch materials, OpenAI pricing page, Mistral pricing. No API endpoint announced by PrismML as of April 1, 2026 — model is designed for local deployment only.
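To see what "variable (per-token)" means in practice, the sketch below multiplies the per-million-token prices from the pricing table by a monthly volume. The 500M tokens/month workload is a hypothetical figure picked for illustration, and the local row leaves out hardware and electricity, which you still pay for.

```python
# Per-million-token prices as listed in the pricing table above.
PRICES_PER_MILLION = {
    "GPT-5.4 (low)": 2.50,
    "GPT-5.4 (high)": 10.00,
    "Mistral Small 4": 0.10,
    "Bonsai 8B (local)": 0.00,
}

MONTHLY_TOKENS = 500_000_000  # hypothetical workload, not from the source

def monthly_cost(price_per_million, tokens):
    """API bill in dollars for `tokens` tokens at a per-million price."""
    return price_per_million * tokens / 1_000_000

for name, price in PRICES_PER_MILLION.items():
    print(f"{name:<18} ${monthly_cost(price, MONTHLY_TOKENS):,.2f}")
```

Swap in your own token volume to get a rough sense of when local inference starts to pay for the device it runs on.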

Key Features

1. True 1-Bit Architecture (No Escape Hatches)

This is the headline claim and what differentiates Bonsai 8B from every prior attempt at 1-bit LLMs. Every weight in the network — including embeddings and the LM head, which were kept in higher precision in earlier research — operates at true binary precision. PrismML calls this “full 1-bit” as opposed to Microsoft’s BitNet b1.58, which uses ternary weights ({-1, 0, +1}) and is technically 1.58-bit. The limitation: true 1-bit at 8B scale has never been commercially deployed before, so the long-tail edge case failure modes aren’t fully mapped yet. Expect a few months of community bug reports before the model’s reliability profile is fully understood.

2. 1.15GB Footprint — Runs on Any Device

The Bonsai 8B GGUF file in Q1_0_g128 quantization is 1.15GB. For reference, a typical 4-bit quantized Llama 3 8B model in GGUF format is 4.7GB. The full FP16 version is 16GB. This means Bonsai 8B loads comfortably on a phone with 3GB free RAM, runs via Metal on any Apple Silicon Mac (including M1 Air with 8GB total), and works on NPU-equipped Windows laptops. The limitation: you’re still running 8B parameters. For very long contexts or highly complex multi-step reasoning chains, smaller models will still hit walls that larger cloud models won’t.
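The 1.15GB figure can be sanity-checked with back-of-the-envelope arithmetic. The sketch below assumes that "g128" means weights are grouped in blocks of 128 and that each block stores one 16-bit scale alongside its sign bits. That reading of the format name is an assumption, not something confirmed by PrismML, though the result lines up with the published file size.

```python
PARAMS = 8.2e9          # parameter count quoted in this review
GROUP_SIZE = 128        # assumption: "g128" means 128 weights per group
SCALE_BITS = 16         # assumption: one 16-bit scale stored per group

def footprint_gb(params, bits_per_weight):
    """Weight storage in decimal gigabytes (10**9 bytes)."""
    return params * bits_per_weight / 8 / 1e9

one_bit_bpw = 1 + SCALE_BITS / GROUP_SIZE   # 1.125 bits per weight
one_bit_gb = footprint_gb(PARAMS, one_bit_bpw)
fp16_gb = footprint_gb(PARAMS, 16)

print(f"1-bit + group scales: {one_bit_gb:.2f} GB")
print(f"FP16:                 {fp16_gb:.1f} GB")
print(f"ratio:                {fp16_gb / one_bit_gb:.1f}x")
```

Under these assumptions the ratio comes out near 14x, which matches the "1/14th the memory footprint" claim. Runtime memory will be higher than the file size once the KV cache and activations are counted.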

3. Apple MLX Native Integration

PrismML ships a fork of mlx-swift with custom 1-bit kernels, enabling native Apple Silicon execution. Bonsai 8B on an iPhone 17 Pro Max reportedly hits 44 tokens/second — faster than many full-size models on high-end consumer GPUs. For iPad and Mac users running the model locally, this is transformative. The limitation: PrismML’s mlx-swift fork requires separate installation and isn’t yet merged into the main MLX project, adding friction for non-technical users.
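One rough way to judge the 44 tokens/second figure is to ask how much weight data the phone would need to stream per second. The sketch below uses the common rule of thumb that single-stream decoding reads every weight once per token. That is a simplification, and it ignores caches, KV-cache reads, and activations.

```python
MODEL_GB = 1.15        # Bonsai 8B weight size quoted in this review
TOKENS_PER_SEC = 44    # reported iPhone 17 Pro Max decode speed

# Assumption: each generated token reads every weight once from memory.
implied_gb_per_sec = MODEL_GB * TOKENS_PER_SEC

print(f"implied weight traffic: {implied_gb_per_sec:.1f} GB/s")
```

Whether that rate is comfortable depends on the device's memory bandwidth, which this review does not have a figure for. The same arithmetic shows why a 16GB FP16 model is a non-starter on a phone: it would need roughly 14 times the traffic for the same speed.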

4. llama.cpp GGUF Compatibility (With a Catch)

Bonsai 8B is available in GGUF format for llama.cpp, which is the de facto standard for local AI on consumer hardware. You can run it in LM Studio, Ollama (with updates), and any llama.cpp-compatible frontend. The catch: you need PrismML’s fork of llama.cpp (at github.com/PrismML-Eng/llama.cpp) to get the 1-bit kernel support for optimal performance. Standard llama.cpp should still run the model but without the speed optimizations. This fork situation will likely resolve itself over the next few months as PrismML’s changes get upstreamed.

5. Full Model Family — 1.7B to 8B

PrismML launched three models simultaneously: Bonsai 1.7B (0.24GB), Bonsai 4B (0.5GB), and Bonsai 8B (1.15GB). The 4B model is particularly interesting for embedded and IoT applications — half a gigabyte for a capable language model is genuinely novel territory. The 1.7B sits in the “smart assistant on a budget device” category. The limitation: none of these are multimodal. Text-only for now. Vision and audio capabilities were not part of the March 31 launch.

6. Apache 2.0 License — Fully Commercial

Unlike Meta’s Llama license (which restricts commercial use above 700M MAU) or Google’s Gemma license, Apache 2.0 is the gold standard of open-source permissiveness. You can use Bonsai 8B in a commercial product, modify it, redistribute it, and build SaaS on top of it without royalties or restrictions. This matters enormously for startups building on-device AI features into apps. The limitation: there’s no fine-tuning toolchain from PrismML yet — training your own 1-bit model from scratch or fine-tuning Bonsai requires custom work.

Who Is PrismML Bonsai 8B For?

Use Bonsai 8B if you:

- Build mobile or embedded AI applications where latency, storage, and battery life matter. A 1.15GB model that runs at 44 tok/s on an iPhone is a product opportunity that didn’t exist yesterday.

- Need fully private, offline AI — healthcare apps, legal document tools, anything where data cannot leave the device. Bonsai 8B gives you genuine 8B-class intelligence with zero cloud dependency.

- Are an indie developer or startup who can’t afford OpenAI API costs at scale. Free, commercial-use, runs on commodity hardware — your inference bill is $0.

- Work in resource-constrained environments — field operations, edge deployments, IoT, rural connectivity scenarios. If you can run it on a Raspberry Pi 5 (testing underway by the community), this is a breakthrough for edge AI.

- Want to experiment with local AI without a GPU rig. No VRAM required. Runs on CPU. Works on the laptop you already have.

Look elsewhere if you:

- Need frontier-level reasoning or coding performance. Bonsai 8B is competitive with Llama 3 8B — which is good, not great, for complex tasks. GPT-5.4 or Gemini 3 Deep Think it is not.

- Require multimodal capabilities. Image, audio, and video understanding are not in the March 31 release. Cloud models like Qwen3.5-Omni still dominate here.

- Need a polished consumer app. Bonsai 8B is a model, not a product. Installation still requires Git, terminal commands, or a dedicated app like Maid/LM Studio. Non-technical users will struggle without a friendlier wrapper.

- Run heavy-context enterprise workloads. Long document analysis, complex multi-agent pipelines — the 8B scale will hit limits that larger cloud models won’t.

How Bonsai 8B Compares to the Competition

| Feature | PrismML Bonsai 8B | llama.cpp + Llama 3 8B | Apple MLX + Llama 3 8B | Microsoft BitNet b1.58 (2B) |
|---|---|---|---|---|
| Parameters | 8.2B | 8B | 8B | 2B |
| Memory footprint | 1.15 GB | 4.7 GB (Q4) | 4.7 GB (Q4) | 0.4 GB |
| Precision | True 1-bit | 4-bit quantized | 4-bit quantized | 1.58-bit ternary |
| Runs on phone? | ✅ Yes (iPhone/Android) | ❌ Too large for most phones | ❌ Too large for most iPhones | ⚠️ 2B scale only, no consumer app |
| Apple Silicon optimized? | ✅ Native MLX fork | ⚠️ Metal support, not optimized for 1-bit | ✅ Native MLX | ❌ No MLX support |
| llama.cpp compatible? | ✅ (needs PrismML fork for best perf) | ✅ Native | ⚠️ Not llama.cpp (MLX separate) | ✅ Via bitnet.cpp (separate project) |
| License | Apache 2.0 (full commercial) | Meta Llama (restricted at scale) | Meta Llama (restricted at scale) | MIT / Research license |
| Commercially viable? | ✅ Full commercial | ⚠️ Partially (Meta license limits) | ⚠️ Partially (Meta license limits) | ⚠️ Research/limited commercial |
| Benchmark avg. (8B class) | 70.5 | ~69.1 (FP16 baseline) | ~67–68 (4-bit degraded) | ~58 (2B scale) |
| Fine-tuning support | ❌ Not yet | ✅ (QLoRA, GGUF adapters) | ✅ (MLX fine-tuning) | ⚠️ Research tooling only |
| Price to use | Free | Free | Free | Free |

Source: PrismML launch materials, llama.cpp documentation, Microsoft BitNet research (April 2025), community testing. Performance figures are approximate — independent third-party benchmarks for Bonsai 8B are still emerging.

For local AI on Mac, the biggest competitor is Apple MLX with standard quantized models. Bonsai 8B’s MLX fork gives you 8B-class performance in 1.15GB versus the typical 4–5GB needed for a Q4 Llama 3 8B. On Windows with CUDA, the comparison against llama.cpp is similar — PrismML’s GGUF format with their llama.cpp fork should outperform standard 4-bit models on inference speed by a significant margin. On mobile, there’s currently no real competition — Bonsai 8B is the only commercially viable option at this capability level. Mistral Small 4 is a far superior model overall, but at 119B parameters it doesn’t run locally on consumer hardware.

Controversy: What They Don’t Advertise

The “true 1-bit” marketing claim is doing heavy lifting. Microsoft’s BitNet b1.58 (technically 1.58-bit ternary) has been publicly available since April 2025 and has its own performance claims. The debate over whether BitNet’s ternary approach or PrismML’s binary approach is genuinely “better” will play out over months of community benchmarking. PrismML’s claim to be the “world’s first commercially viable 1-bit LLM” is technically defensible — BitNet’s 2B model never had an Apache 2.0 commercial license or a phone-ready deployment story — but expect pushback from Microsoft researchers who will argue 1.58-bit ternary is superior in practice.

The llama.cpp fork situation creates fragmentation risk. Requiring users to run a custom fork of llama.cpp for optimal performance means Bonsai 8B is partially stranded from the mainstream local AI ecosystem. If PrismML doesn’t upstream their 1-bit kernels quickly, the model risks being left behind as llama.cpp evolves. This has happened with other “fork-first” models before.

Independent benchmarks are less than 24 hours old. PrismML’s headline figure, a “70.5 average across six categories” that is competitive with “leading FP16 8B instruct models,” is self-reported. The AI community has been burned by company-reported benchmarks before (see: every model launch in 2025). The early community tests are encouraging, but the full picture won’t be clear for another week or two as researchers run standardized evaluations.

No fine-tuning toolchain at launch. For researchers and developers who want to customize Bonsai 8B for specific domains, there’s no PrismML-supported fine-tuning path yet. You can fine-tune a 4-bit Llama 3 8B with QLoRA on a consumer GPU. You cannot do the equivalent with Bonsai 8B today. This limits enterprise adoption until the training tooling catches up.

Historical 1-bit LLM quality concerns on complex math. Previous 1-bit models have struggled with multi-step mathematical reasoning, even when they perform well on simpler tasks. Early testers report Bonsai 8B handles fraction arithmetic and logic problems correctly — which is genuinely surprising — but systematic math and reasoning benchmarks are pending. GSM8K and MATH scores will be the acid test.
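For readers who want to run their own spot checks while waiting for official numbers, here is a minimal sketch of GSM8K-style exact-match scoring. GSM8K reference answers end with a `#### <number>` line, so a common approach is to compare the last number in the model's output against it. The helper names and the two sample items are made up for illustration. They are not real benchmark data or Bonsai output.

```python
import re

# Matches integers and decimals, allowing thousands separators like 1,200.
NUMBER = re.compile(r"-?\d[\d,]*(?:\.\d+)?")

def last_number(text):
    """Return the last number in the text as a float, or None if there is none."""
    matches = NUMBER.findall(text)
    if not matches:
        return None
    return float(matches[-1].replace(",", ""))

def exact_match_accuracy(predictions, references):
    """Fraction of predictions whose final number equals the reference's."""
    correct = sum(
        last_number(p) is not None and last_number(p) == last_number(r)
        for p, r in zip(predictions, references)
    )
    return correct / len(references)

# Made-up items for illustration only.
preds = ["1/2 + 1/4 = 3/4, so the answer is 0.75", "She has 12 apples left."]
refs = ["#### 0.75", "#### 13"]
print(exact_match_accuracy(preds, refs))
```

Real evaluation harnesses handle units, fractions, and formatting quirks more carefully, so treat this as a quick sanity check rather than a substitute for standardized results.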

Pros and Cons

Pros

- Genuinely revolutionary size-to-performance ratio — 8B-class performance at 1.15GB is something the local AI community has never had before.

- Runs on phones and thin laptops — the only commercially viable LLM in its performance tier that works on mobile hardware.

- Full Apache 2.0 commercial license — no usage restrictions, no royalties, no approval needed for commercial deployment.

- 8x faster inference than FP16 equivalent — on the same hardware, you’re getting meaningfully better throughput.

- Free with zero ongoing inference cost — if you’re building something that needs to scale, this eliminates your entire API budget.

- Three model sizes at launch (1.7B, 4B, 8B) — right-size your deployment from IoT edge devices up to laptop-grade hardware.

- Apple MLX native support — iPhone 17 Pro Max hits 44 tokens/second, which makes on-device AI actually usable in consumer apps.

Cons

- Requires PrismML’s llama.cpp fork for best performance — fragmentation from the standard local AI toolchain is a genuine friction point.

- No fine-tuning support at launch — can’t adapt the model for domain-specific use cases without significant custom engineering work.

- Text-only (no multimodal) — vision, audio, and video capabilities were not included in the March 31 release.

- Self-reported benchmarks only (so far) — the 70.5 average score needs third-party validation before full trust is warranted.

- Historical 1-bit weakness in complex math — GSM8K and MATH benchmark results are still pending; this is the area to watch.

Getting Started with PrismML Bonsai 8B

The fastest path to running Bonsai 8B is PrismML’s demo repository, which handles all the dependencies for you:

Step 1: Clone the Demo Repository

Open a terminal and run:

git clone https://github.com/PrismML-Eng/Bonsai-demo.git

cd Bonsai-demo

Step 2: Set Your Model Size (Optional)

The 8B model is the default. To switch, set BONSAI_MODEL to the size you want (shown here with the default value):

- macOS/Linux:

export BONSAI_MODEL=8B

- Windows (PowerShell):

$env:BONSAI_MODEL = "8B"

Step 3: Run the Setup Script

This installs dependencies, downloads the model (1.15GB), and fetches the PrismML llama.cpp binaries:

- macOS/Linux:

./setup.sh

- Windows:

Set-ExecutionPolicy -Scope Process -ExecutionPolicy Bypass

.\setup.ps1

System requirements: 8GB RAM (16GB recommended), modern CPU with AVX2. NVIDIA GPU (CUDA) and Apple Silicon (Metal) both supported for acceleration.

Step 4: Run Your First Prompt

BONSAI_MODEL=8B ./scripts/run_llama.sh -p "Explain quantum computing in plain English"

On Windows, the equivalent PowerShell command is in the demo repo README.
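If you would rather call the demo script from your own code than from the terminal, a thin wrapper is enough. The sketch below only reuses what the command above shows: the `BONSAI_MODEL` environment variable, the `./scripts/run_llama.sh` path, and the `-p` flag. The helper names `build_invocation` and `run_prompt` are hypothetical, and the script's output format is not documented here, so the wrapper simply returns raw stdout.

```python
import os
import subprocess

SCRIPT = "./scripts/run_llama.sh"  # path as shown in the step above

def build_invocation(prompt, model="8B"):
    """Return (argv, env) for the demo script without running anything."""
    env = dict(os.environ, BONSAI_MODEL=model)  # copy of the current env plus model size
    argv = [SCRIPT, "-p", prompt]
    return argv, env

def run_prompt(prompt, model="8B"):
    """Run the demo script from the repo root and return its raw stdout."""
    argv, env = build_invocation(prompt, model)
    result = subprocess.run(
        argv, env=env, capture_output=True, text=True, check=True
    )
    return result.stdout
```

Call `run_prompt("Explain quantum computing in plain English")` from inside the cloned Bonsai-demo directory after the setup script has finished. Passing the prompt as a list element rather than a shell string avoids quoting problems with user-supplied text.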

Step 5: Explore the Model Family and Integrations

- Hugging Face: Browse huggingface.co/prism-ml for all three model sizes in both GGUF and MLX formats.

- Apple MLX (iPhone/iPad/Mac): Download prism-ml/Bonsai-8B-mlx-1bit from Hugging Face and follow PrismML’s MLX Swift setup guide.

- Android: Use the Maid app (F-Droid) to load the GGUF file, or compile PrismML’s llama.cpp fork via Termux for terminal access.

- LM Studio / Open WebUI: Load the GGUF file manually — note that UI-level 1-bit optimizations require PrismML’s fork to be active in the backend.

Frequently Asked Questions

What is PrismML Bonsai 8B?

PrismML Bonsai 8B is an 8.2-billion parameter large language model that uses true 1-bit weight quantization across every layer — including embeddings, attention, MLP, and the LM head. It requires only 1.15GB of RAM and runs on consumer hardware including phones, laptops, and edge devices. Launched March 31, 2026 by PrismML (a Caltech-backed startup), it’s available free under the Apache 2.0 license from Hugging Face.

Is PrismML Bonsai 8B free to use commercially?

Yes. Bonsai 8B is released under the Apache 2.0 license, which allows full commercial use, modification, and redistribution without royalties or restrictions. This is more permissive than Meta’s Llama license (which restricts use above 700M monthly active users) and makes Bonsai 8B uniquely attractive for startups and enterprises building AI products.

How does Bonsai 8B compare to Llama 3 8B?

Bonsai 8B reports an average benchmark score of 70.5 across six categories, which is competitive with Llama 3 8B (MMLU: 68.4, HumanEval: 62.2). The key differences: Bonsai 8B requires only 1.15GB RAM versus Llama 3 8B’s 16GB at FP16 (or ~4.7GB at Q4 quantization), runs 8x faster on the same hardware, and carries a more permissive Apache 2.0 license. Full third-party benchmark comparisons are still emerging as of April 1, 2026.

Can I run Bonsai 8B on an iPhone?

Yes — this is one of Bonsai 8B’s headline capabilities. PrismML provides a native MLX Swift fork with custom 1-bit kernels optimized for Apple Silicon. iPhone 17 Pro Max reportedly achieves approximately 44 tokens per second. PrismML has also partnered with the “Locally AI” app for a more consumer-friendly iPhone deployment experience.

What hardware do I need to run Bonsai 8B?

Any device with at least 2–3GB of free RAM can run Bonsai 8B — 8GB+ is recommended for smooth performance. It supports NVIDIA GPUs via CUDA, Apple Silicon via Metal/MLX, and standard CPU execution (AVX2 recommended for best speed). This includes MacBooks with 8GB RAM, Windows laptops, Android phones via Termux, and iPhones via the MLX Swift fork.

How is Bonsai 8B different from Microsoft’s BitNet b1.58?

Microsoft BitNet b1.58 uses ternary weights ({-1, 0, +1}) — technically 1.58-bit, not true 1-bit. BitNet’s flagship model is 2B parameters with 0.4GB footprint. PrismML Bonsai 8B uses true binary weights across all layers at 8B parameters (1.15GB), ships with an Apache 2.0 commercial license, and supports iPhone deployment via MLX — none of which BitNet offers. Both approaches are pioneering; BitNet has more published research; Bonsai 8B has more immediate commercial deployability.

What are the limitations of Bonsai 8B?

Key limitations as of launch: text-only (no image/audio/video input), no fine-tuning toolchain, requires PrismML’s llama.cpp fork for optimized performance, benchmarks are still self-reported and pending full third-party validation, and complex mathematical reasoning has historically been a weakness for 1-bit models (systematic math benchmarks pending). It also won’t match frontier models like GPT-5.4 or Gemini 3 Deep Think for demanding tasks.

Is Bonsai 8B better than using llama.cpp with a standard quantized model?

For mobile and RAM-constrained devices: definitively yes. Bonsai 8B at 1.15GB versus a Q4 Llama 3 8B at 4.7GB is the difference between “runs on a phone” and “doesn’t.” For desktop users with 16GB+ RAM, a standard Q4 model currently offers more ecosystem maturity (LM Studio, Ollama, Jan, etc.), better fine-tuning support via QLoRA, and broader compatibility. The ecosystem gap will likely close over 2026 as PrismML’s tooling matures.

How do I install PrismML Bonsai 8B?

Clone PrismML’s demo repository: git clone https://github.com/PrismML-Eng/Bonsai-demo.git && cd Bonsai-demo. Then run ./setup.sh (macOS/Linux) or ./setup.ps1 (Windows PowerShell). The script downloads the model and installs the PrismML llama.cpp fork automatically. Full setup takes under 5 minutes on a fast internet connection. Models are also downloadable manually from Hugging Face (prism-ml/Bonsai-8B-gguf).

Is PrismML Bonsai 8B worth it in 2026?

For specific use cases — mobile AI development, fully private offline deployments, zero-cost inference at scale, or edge/IoT AI — Bonsai 8B is a clear yes and genuinely changes what’s possible on consumer hardware. For general-purpose AI work where you need best-in-class reasoning, multimodal capabilities, or a polished UX, cloud models like GPT-5.4, Gemini 3 Deep Think, or Mistral Small 4 are still the better choice. Bonsai 8B’s value is specifically in unlocking AI for contexts where cloud models can’t go.

Final Verdict

PrismML Bonsai 8B is the most significant development in local AI since llama.cpp made running LLMs on consumer hardware possible in 2023. The combination of true 1-bit architecture, 8B parameter scale, 1.15GB footprint, phone-grade performance (44 tok/s on iPhone 17 Pro Max), Apache 2.0 commercial license, and competitive benchmark performance represents something that has genuinely never existed before. If the self-reported numbers survive third-party validation, the researchers who said 1-bit LLMs could match full-precision models will have been proven right, and PrismML will be the team that turned the theory into a deployable product.

The caveats are real: benchmarks need third-party validation, the llama.cpp fork creates ecosystem friction, fine-tuning support is missing, and the model is text-only. This is a Day 1 launch, and Day 1 products have rough edges. But the core architecture works, early testers confirm the efficiency claims, and the commercial licensing is airtight. For anyone building mobile AI apps, private enterprise deployments, or edge AI systems, Bonsai 8B just became the first thing you should evaluate.

Download it today if you’re building anything on consumer hardware or mobile: huggingface.co/prism-ml or PrismML demo repo. Wait and watch if you need frontier reasoning performance or multimodal capabilities — the cloud is still the better call for demanding tasks. But watch closely: if the fine-tuning toolchain ships and the benchmark validation holds up, this model’s score goes higher.

Rating: 8.4/10 — a breakthrough on hardware accessibility that changes the ceiling for local AI, held back only by early-stage ecosystem gaps that will close over 2026.

Disclosure: ComputerTech has no commercial relationship with PrismML. All performance data cited from PrismML launch materials and early independent community testing as of April 1, 2026. We will update this review as third-party benchmarks emerge.